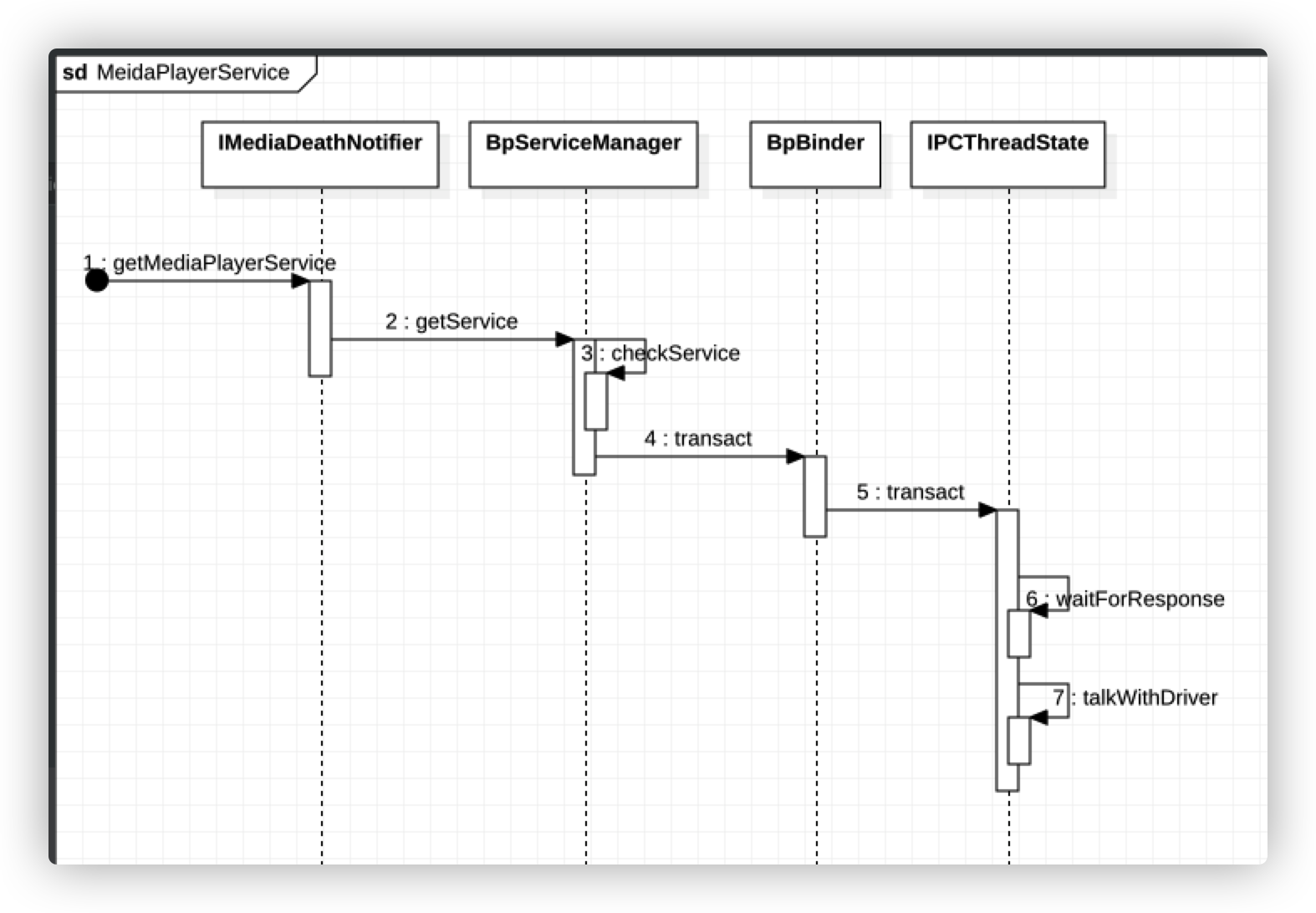

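Continuing with MediaPlayerService as the example, this article walks through how a system service is obtained. The process has two parts: the client side, where the MediaPlayerService client requests the service, and the server side, where ServiceManager handles the request.

To obtain MediaPlayerService, a client first has to talk to ServiceManager, calling its getService function to get the information for the corresponding service.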

frameworks/av/media/libmedia/IMediaDeathNotifier.cpp

```cpp
// Establish the Binder interface to MediaPlayerService.
/*static*/ const sp<IMediaPlayerService>
IMediaDeathNotifier::getMediaPlayerService()
{
    ALOGV("getMediaPlayerService");
    Mutex::Autolock _l(sServiceLock);
    if (sMediaPlayerService == 0) {
        sp<IServiceManager> sm = defaultServiceManager();
        sp<IBinder> binder;
        do {
            binder = sm->getService(String16("media.player"));
            if (binder != 0) {
                break;
            }
            ALOGW("Media player service not published, waiting...");
            usleep(500000); // 0.5 s
        } while (true);

        if (sDeathNotifier == NULL) {
            sDeathNotifier = new DeathNotifier();
        }
        binder->linkToDeath(sDeathNotifier);
        sMediaPlayerService = interface_cast<IMediaPlayerService>(binder);
    }
    ALOGE_IF(sMediaPlayerService == 0, "no media player service!?");
    return sMediaPlayerService;
}
```

defaultServiceManager returns a BpServiceManager. Calling getService on it looks up the system service named media.player, and the binder it returns is a BpBinder. Because MediaPlayerService may not be registered yet, the loop sleeps for 0.5 s and calls getService again until the service is obtained. interface_cast then converts the BpBinder into a BpMediaPlayerService. After the analysis in the previous articles, this code should now read quite smoothly.

1.1-getService

frameworks/native/libs/binder/IServiceManager.cpp::BpServiceManager

```cpp
virtual sp<IBinder> getService(const String16& name) const
{
    // Fast path: the service is already registered.
    sp<IBinder> svc = checkService(name);
    if (svc != NULL) return svc;

    const bool isVendorService =
        strcmp(ProcessState::self()->getDriverName().c_str(), "/dev/vndbinder") == 0;
    const long timeout = uptimeMillis() + 5000;
    if (!gSystemBootCompleted && !isVendorService) {
        char bootCompleted[PROPERTY_VALUE_MAX];
        property_get("sys.boot_completed", bootCompleted, "0");
        gSystemBootCompleted = strcmp(bootCompleted, "1") == 0 ? true : false;
    }
    // Retry interval in milliseconds.
    const long sleepTime = gSystemBootCompleted ? 1000 : 100;

    int n = 0;
    while (uptimeMillis() < timeout) {
        n++;
        if (isVendorService) {
            ALOGI("Waiting for vendor service %s...", String8(name).string());
            CallStack stack(LOG_TAG);
        } else if (n%10 == 0) {
            ALOGI("Waiting for service %s...", String8(name).string());
        }
        usleep(1000*sleepTime);

        sp<IBinder> svc = checkService(name);
        if (svc != NULL) return svc;
    }
    ALOGW("Service %s didn't start. Returning NULL", String8(name).string());
    return NULL;
}

virtual sp<IBinder> checkService(const String16& name) const
{
    Parcel data, reply;
    data.writeInterfaceToken(IServiceManager::getInterfaceDescriptor());
    data.writeString16(name);
    remote()->transact(CHECK_SERVICE_TRANSACTION, data, &reply);
    return reply.readStrongBinder();
}
```

getService polls for the service: it calls checkService once and, if the service is not there yet, keeps retrying until a 5-second timeout expires, after which it returns NULL. The lookup itself is done by checkService, which writes the interface token and the service name into the data Parcel and then calls transact on remote(), that is, on the BpBinder, passing data along with a reply Parcel that receives the result.
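The retry logic of getService can be illustrated outside Android as a deadline-bounded poll. The following is only a sketch of that pattern: `pollUntil`, its parameters, and the use of `std::optional` are hypothetical names chosen for the illustration and are not part of libbinder.

```cpp
#include <cassert>
#include <chrono>
#include <functional>
#include <optional>
#include <thread>

// Hypothetical helper: calls `lookup` until it yields a value or `timeout`
// elapses. It mirrors the shape of getService, not its actual code.
template <typename T>
std::optional<T> pollUntil(const std::function<std::optional<T>()>& lookup,
                           std::chrono::milliseconds timeout,
                           std::chrono::milliseconds interval) {
    using Clock = std::chrono::steady_clock;

    // Fast path: one attempt before any sleeping, like the first checkService call.
    if (auto value = lookup()) return value;

    const auto deadline = Clock::now() + timeout;
    while (Clock::now() < deadline) {
        std::this_thread::sleep_for(interval);
        if (auto value = lookup()) return value;
    }
    // The caller sees an empty result, comparable to getService returning NULL.
    return std::nullopt;
}
```

A caller would pass a lambda that performs one lookup attempt, together with the overall timeout and the sleep interval between attempts.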

frameworks/native/libs/binder/BpBinder.cpp

```cpp
status_t BpBinder::transact(
    uint32_t code, const Parcel& data, Parcel* reply, uint32_t flags)
{
    // Once a binder has died, it never comes back to life.
    if (mAlive) {
        status_t status = IPCThreadState::self()->transact(
            mHandle, code, data, reply, flags);
        if (status == DEAD_OBJECT) mAlive = 0;
        return status;
    }

    return DEAD_OBJECT;
}
```

By now this code should look familiar. It calls the transact function of IPCThreadState:
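The mAlive flag in BpBinder::transact acts as a one-way latch: after the first DEAD_OBJECT result, later calls fail immediately without reaching the driver. A minimal standalone sketch of that idea follows. `LatchingProxy`, `kOk`, and `kDeadObject` are hypothetical stand-ins, and the numeric value of `kDeadObject` is an arbitrary placeholder rather than the real status code.

```cpp
#include <cassert>
#include <functional>
#include <utility>

constexpr int kOk = 0;
constexpr int kDeadObject = -32;  // placeholder value, an assumption for this sketch

// Hypothetical proxy that stops forwarding calls once the remote side has died.
class LatchingProxy {
public:
    explicit LatchingProxy(std::function<int()> send) : send_(std::move(send)) {}

    int transact() {
        if (!alive_) return kDeadObject;  // never retry a dead remote
        const int status = send_();
        if (status == kDeadObject) alive_ = false;
        return status;
    }

    bool alive() const { return alive_; }

private:
    std::function<int()> send_;  // stands in for IPCThreadState::transact
    bool alive_ = true;
};
```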

frameworks/native/libs/binder/IPCThreadState.cpp

```cpp
status_t IPCThreadState::transact(int32_t handle,
                                  uint32_t code, const Parcel& data,
                                  Parcel* reply, uint32_t flags)
{
    status_t err;

    flags |= TF_ACCEPT_FDS;

    // Queue the BC_TRANSACTION command and its payload in mOut.
    err = writeTransactionData(BC_TRANSACTION, flags, handle, code, data, NULL);

    if (err != NO_ERROR) {
        if (reply) reply->setError(err);
        return (mLastError = err);
    }

    if ((flags & TF_ONE_WAY) == 0) {
        #if 0
        if (code == 4) { // relayout
            ALOGI(">>>>>> CALLING transaction 4");
        } else {
            ALOGI(">>>>>> CALLING transaction %d", code);
        }
        #endif
        // Synchronous call: block until the reply arrives.
        if (reply) {
            err = waitForResponse(reply);
        } else {
            Parcel fakeReply;
            err = waitForResponse(&fakeReply);
        }
    } else {
        // One-way call: no reply is expected.
        err = waitForResponse(NULL, NULL);
    }

    return err;
}
```

Once again the first step is writeTransactionData, which writes the outgoing data. BC_TRANSACTION is a command-protocol code that the client sends to the Binder driver.

```cpp
status_t IPCThreadState::writeTransactionData(int32_t cmd, uint32_t binderFlags,
    int32_t handle, uint32_t code, const Parcel& data, status_t* statusBuffer)
{
    binder_transaction_data tr;

    tr.target.ptr = 0; // don't pass uninitialized stack data to a remote process
    tr.target.handle = handle;
    tr.code = code;
    tr.flags = binderFlags;
    tr.cookie = 0;
    tr.sender_pid = 0;
    tr.sender_euid = 0;

    const status_t err = data.errorCheck();
    if (err == NO_ERROR) {
        tr.data_size = data.ipcDataSize();
        tr.data.ptr.buffer = data.ipcData();
        tr.offsets_size = data.ipcObjectsCount()*sizeof(binder_size_t);
        tr.data.ptr.offsets = data.ipcObjects();
    } else if (statusBuffer) {
        tr.flags |= TF_STATUS_CODE;
        *statusBuffer = err;
        tr.data_size = sizeof(status_t);
        tr.data.ptr.buffer = reinterpret_cast<uintptr_t>(statusBuffer);
        tr.offsets_size = 0;
        tr.data.ptr.offsets = 0;
    } else {
        return (mLastError = err);
    }

    // Command word first, then the transaction descriptor.
    mOut.writeInt32(cmd);
    mOut.write(&tr, sizeof(tr));

    return NO_ERROR;
}
```

writeTransactionData writes the BC_TRANSACTION command followed by the binder_transaction_data struct into mOut, the buffer that holds data to be sent to the Binder driver.

Next, look at the waitForResponse function:
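The "command word followed by a struct" layout that ends up in mOut can be shown with a plain byte buffer. This is a simplified sketch under stated assumptions: `FakeTransactionData` and `OutBuffer` are hypothetical stand-ins for binder_transaction_data and the mOut Parcel, and the real struct has more fields.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <cstring>
#include <vector>

// Hypothetical, heavily reduced stand-in for binder_transaction_data.
struct FakeTransactionData {
    uint32_t handle;
    uint32_t code;
    uint32_t flags;
    uint64_t data_size;
};

// Hypothetical stand-in for mOut: an append-only byte buffer.
class OutBuffer {
public:
    void writeInt32(int32_t v) { write(&v, sizeof(v)); }
    void write(const void* p, size_t n) {
        const auto* b = static_cast<const uint8_t*>(p);
        bytes_.insert(bytes_.end(), b, b + n);
    }
    const std::vector<uint8_t>& bytes() const { return bytes_; }

private:
    std::vector<uint8_t> bytes_;
};

// Queue one record: a 4-byte command word, then the raw struct.
inline void queueTransaction(OutBuffer& out, int32_t cmd,
                             const FakeTransactionData& tr) {
    out.writeInt32(cmd);
    out.write(&tr, sizeof(tr));
}

// Read the record back in the same order, as a receiver would.
inline FakeTransactionData readTransaction(const std::vector<uint8_t>& bytes,
                                           int32_t* cmdOut) {
    FakeTransactionData tr{};
    std::memcpy(cmdOut, bytes.data(), sizeof(int32_t));
    std::memcpy(&tr, bytes.data() + sizeof(int32_t), sizeof(tr));
    return tr;
}
```

Nothing is sent at this point; the record only sits in the buffer until a later step hands the buffer to the driver.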

waitForResponse

```cpp
status_t IPCThreadState::waitForResponse(Parcel *reply, status_t *acquireResult)
{
    uint32_t cmd;
    int32_t err;

    while (1) {
        // Exchange data with the Binder driver.
        if ((err=talkWithDriver()) < NO_ERROR) break;
        err = mIn.errorCheck();
        if (err < NO_ERROR) break;
        if (mIn.dataAvail() == 0) continue;

        cmd = (uint32_t)mIn.readInt32();

        switch (cmd) {
        case BR_TRANSACTION_COMPLETE:
            if (!reply && !acquireResult) goto finish;
            break;

        // Other commands are omitted in this excerpt.

        default:
            err = executeCommand(cmd);
            if (err != NO_ERROR) goto finish;
            break;
        }
    }

finish:
    if (err != NO_ERROR) {
        if (acquireResult) *acquireResult = err;
        if (reply) reply->setError(err);
        mLastError = err;
    }

    return err;
}
```

It first calls talkWithDriver, which was also analyzed in an earlier article.

talkWithDriver

```cpp
status_t IPCThreadState::talkWithDriver(bool doReceive)
{
    if (mProcess->mDriverFD <= 0) {
        return -EBADF;
    }

    binder_write_read bwr;

    // Is the read buffer empty?
    const bool needRead = mIn.dataPosition() >= mIn.dataSize();

    // Don't write anything while unread input remains and the caller
    // has asked to read the next data.
    const size_t outAvail = (!doReceive || needRead) ? mOut.dataSize() : 0;

    bwr.write_size = outAvail;
    bwr.write_buffer = (uintptr_t)mOut.data();

    // This is what we'll read.
    if (doReceive && needRead) {
        bwr.read_size = mIn.dataCapacity();
        bwr.read_buffer = (uintptr_t)mIn.data();
    } else {
        bwr.read_size = 0;
        bwr.read_buffer = 0;
    }

    // Return immediately if there is nothing to do.
    if ((bwr.write_size == 0) && (bwr.read_size == 0)) return NO_ERROR;

    bwr.write_consumed = 0;
    bwr.read_consumed = 0;
    status_t err;
    do {
        IF_LOG_COMMANDS() {
            alog << "About to read/write, write size = " << mOut.dataSize() << endl;
        }
#if defined(__ANDROID__)
        if (ioctl(mProcess->mDriverFD, BINDER_WRITE_READ, &bwr) >= 0)
            err = NO_ERROR;
        else
            err = -errno;
#else
        err = INVALID_OPERATION;
#endif
        if (mProcess->mDriverFD <= 0) {
            err = -EBADF;
        }
        IF_LOG_COMMANDS() {
            alog << "Finished read/write, write size = " << mOut.dataSize() << endl;
        }
    } while (err == -EINTR);

    // Handling of the consumed byte counts is omitted in this excerpt.
    return err;
}
```

binder_write_read is the struct used to communicate with the Binder driver. Its write_buffer is set to point at the data in mOut, and its read_buffer is set to point at the buffer of mIn so that the driver can fill it. The actual exchange with the Binder driver again goes through ioctl.

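mIn receives data coming from the Binder driver.

mOut holds data to be sent to the Binder driver.

The sequence diagram above gives a simplified view of the client-side flow. After the MediaPlayerService client sends the BC_TRANSACTION command to the Binder driver, the driver sends a BR_TRANSACTION command to ServiceManager. Next, we look at how ServiceManager on the server side handles the request to obtain a service.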
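How talkWithDriver decides what to write and what to read depends only on doReceive and on whether mIn has been fully consumed. The sketch below isolates that decision as a pure function; `IoPlan`, `planIo`, and the parameter names are hypothetical and exist only for this illustration.

```cpp
#include <cassert>
#include <cstddef>

// Hypothetical result type: the sizes that would be placed in binder_write_read.
struct IoPlan {
    size_t writeSize;
    size_t readSize;
};

// inPos/inSize: read cursor and length of the data already received (mIn).
// outSize: bytes queued for the driver (mOut).
// inCapacity: size of the input buffer offered to the driver.
inline IoPlan planIo(bool doReceive, size_t inPos, size_t inSize,
                     size_t outSize, size_t inCapacity) {
    // True when everything previously received has been consumed.
    const bool needRead = inPos >= inSize;

    IoPlan plan{};
    // Write only if we are not about to keep reading leftover input.
    plan.writeSize = (!doReceive || needRead) ? outSize : 0;
    // Read only if the caller wants data and none is left over.
    plan.readSize = (doReceive && needRead) ? inCapacity : 0;
    return plan;
}
```

With unread input left and doReceive set, both sizes come out as zero, which corresponds to the early `return NO_ERROR` in the listing.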

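Server side: ServiceManager handles the request

The previous article described ServiceManager as the general manager of the Binder mechanism: every service registration and lookup goes through it, and it processes incoming messages in the infinite polling loop of binder_loop. Back to the binder_loop function: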

frameworks/native/cmds/servicemanager/binder.c

```c
void binder_loop(struct binder_state *bs, binder_handler func)
{
    int res;
    struct binder_write_read bwr;
    uint32_t readbuf[32];

    bwr.write_size = 0;
    bwr.write_consumed = 0;
    bwr.write_buffer = 0;

    // Tell the driver that this thread is entering the loop.
    readbuf[0] = BC_ENTER_LOOPER;
    binder_write(bs, readbuf, sizeof(uint32_t));

    for (;;) {
        bwr.read_size = sizeof(readbuf);
        bwr.read_consumed = 0;
        bwr.read_buffer = (uintptr_t) readbuf;

        // Blocks in the driver until there is something to read.
        res = ioctl(bs->fd, BINDER_WRITE_READ, &bwr);

        if (res < 0) {
            ALOGE("binder_loop: ioctl failed (%s)\n", strerror(errno));
            break;
        }

        res = binder_parse(bs, 0, (uintptr_t) readbuf, bwr.read_consumed, func);
        if (res == 0) {
            ALOGE("binder_loop: unexpected reply?!\n");
            break;
        }
        if (res < 0) {
            ALOGE("binder_loop: io error %d %s\n", res, strerror(errno));
            break;
        }
    }
}
```

binder_loop calls ioctl in an infinite loop, repeatedly issuing BINDER_WRITE_READ to ask the Binder driver for new requests. When a request arrives it is handed to binder_parse; when there is none, the current thread sleeps inside the Binder driver.

frameworks/native/cmds/servicemanager/binder.c

```c
int binder_parse(struct binder_state *bs, struct binder_io *bio,
                 uintptr_t ptr, size_t size, binder_handler func)
{
    int r = 1;
    uintptr_t end = ptr + (uintptr_t) size;

    while (ptr < end) {
        uint32_t cmd = *(uint32_t *) ptr;
        ptr += sizeof(uint32_t);
#if TRACE
        fprintf(stderr,"%s:\n", cmd_name(cmd));
#endif
        switch(cmd) {
        // Other commands are omitted in this excerpt.
        case BR_TRANSACTION: {
            struct binder_transaction_data *txn = (struct binder_transaction_data *) ptr;
            if ((end - ptr) < sizeof(*txn)) {
                ALOGE("parse: txn too small!\n");
                return -1;
            }
            binder_dump_txn(txn);
            if (func) {
                unsigned rdata[256/4];
                struct binder_io msg;
                struct binder_io reply;
                int res;

                bio_init(&reply, rdata, sizeof(rdata), 4);
                bio_init_from_txn(&msg, txn);
                // func is svcmgr_handler in ServiceManager.
                res = func(bs, txn, &msg, &reply);
                if (txn->flags & TF_ONE_WAY) {
                    binder_free_buffer(bs, txn->data.ptr.buffer);
                } else {
                    binder_send_reply(bs, &reply, txn->data.ptr.buffer, res);
                }
            }
            ptr += sizeof(*txn);
            break;
        }
        }
    }

    return r;
}
```

The listing keeps only the BR_TRANSACTION branch of binder_parse, because that is the command ServiceManager receives from the Binder driver here. func is passed down unchanged through the parameters and points to the svcmgr_handler function:
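The buffer walk in binder_parse, a command word followed by a command-specific payload with a size check before the payload is touched, can be sketched in standalone C++. The command codes, `FakeTxn`, and `parseCommands` below are hypothetical and only imitate the structure of the loop; they are not the Binder protocol values.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <cstring>
#include <functional>
#include <vector>

// Hypothetical command codes for this sketch.
constexpr uint32_t kCmdNoop = 1;         // carries no payload
constexpr uint32_t kCmdTransaction = 2;  // followed by one FakeTxn

// Hypothetical, reduced stand-in for binder_transaction_data.
struct FakeTxn {
    uint32_t code;
    uint32_t flags;
};

// Walks the buffer record by record. Returns the number of transactions
// dispatched to `handler`, or -1 if the buffer is malformed.
inline int parseCommands(const std::vector<uint8_t>& buf,
                         const std::function<void(const FakeTxn&)>& handler) {
    size_t pos = 0;
    int dispatched = 0;
    while (pos < buf.size()) {
        if (buf.size() - pos < sizeof(uint32_t)) return -1;
        uint32_t cmd;
        std::memcpy(&cmd, buf.data() + pos, sizeof(cmd));
        pos += sizeof(cmd);

        switch (cmd) {
        case kCmdNoop:
            break;
        case kCmdTransaction: {
            // Same idea as the "txn too small" check in binder_parse.
            if (buf.size() - pos < sizeof(FakeTxn)) return -1;
            FakeTxn txn;
            std::memcpy(&txn, buf.data() + pos, sizeof(txn));
            if (handler) handler(txn);
            pos += sizeof(txn);
            ++dispatched;
            break;
        }
        default:
            return -1;  // unknown command
        }
    }
    return dispatched;
}
```

Passing the handler as a parameter plays the same role as the func argument that binder_loop forwards to binder_parse.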

frameworks/native/cmds/servicemanager/service_manager.c

```c
int svcmgr_handler(struct binder_state *bs,
                   struct binder_transaction_data *txn,
                   struct binder_io *msg,
                   struct binder_io *reply)
{
    struct svcinfo *si;
    uint16_t *s;
    size_t len;
    uint32_t handle;
    uint32_t strict_policy;
    int allow_isolated;
    uint32_t dumpsys_priority;

    // Header written by writeInterfaceToken: strict-mode policy and
    // interface descriptor (validation is omitted in this excerpt).
    strict_policy = bio_get_uint32(msg);
    s = bio_get_string16(msg, &len);

    switch(txn->code) {
    case SVC_MGR_GET_SERVICE:
    case SVC_MGR_CHECK_SERVICE:
        // Service name requested by the client.
        s = bio_get_string16(msg, &len);
        if (s == NULL) {
            return -1;
        }
        handle = do_find_service(s, len, txn->sender_euid, txn->sender_pid);
        if (!handle)
            break;
        bio_put_ref(reply, handle);
        return 0;

    case SVC_MGR_ADD_SERVICE:
        s = bio_get_string16(msg, &len);
        if (s == NULL) {
            return -1;
        }
        handle = bio_get_ref(msg);
        allow_isolated = bio_get_uint32(msg) ? 1 : 0;
        dumpsys_priority = bio_get_uint32(msg);
        if (do_add_service(bs, s, len, handle, txn->sender_euid, allow_isolated,
                           dumpsys_priority, txn->sender_pid))
            return -1;
        break;

    case SVC_MGR_LIST_SERVICES: {
        uint32_t n = bio_get_uint32(msg);
        uint32_t req_dumpsys_priority = bio_get_uint32(msg);

        if (!svc_can_list(txn->sender_pid, txn->sender_euid)) {
            ALOGE("list_service() uid=%d - PERMISSION DENIED\n",
                  txn->sender_euid);
            return -1;
        }
        si = svclist;
        // Walk to the nth service that matches the requested priority.
        while (si) {
            if (si->dumpsys_priority & req_dumpsys_priority) {
                if (n == 0) break;
                n--;
            }
            si = si->next;
        }
        if (si) {
            bio_put_string16(reply, si->name);
            return 0;
        }
        return -1;
    }
    default:
        ALOGE("unknown code %d\n", txn->code);
        return -1;
    }

    bio_put_uint32(reply, 0);
    return 0;
}
```

The previous article showed that registering a service with ServiceManager takes the SVC_MGR_ADD_SERVICE case. Obtaining a service takes the SVC_MGR_GET_SERVICE / SVC_MGR_CHECK_SERVICE branch: checkService sends CHECK_SERVICE_TRANSACTION, and the two cases share the same code. That branch ends up calling the do_find_service function:

frameworks/native/cmds/servicemanager/service_manager.c

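```c
uint32_t do_find_service(const uint16_t *s, size_t len, uid_t uid, pid_t spid)
{
    struct svcinfo *si = find_svc(s, len);

    if (!si || !si->handle) {
        return 0;
    }

    if (!si->allow_isolated) {
        // If this service doesn't allow access from isolated processes,
        // check the uid to see whether the caller is isolated.
        uid_t appid = uid % AID_USER;
        if (appid >= AID_ISOLATED_START && appid <= AID_ISOLATED_END) {
            return 0;
        }
    }

    // Permission check on the caller.
    if (!svc_can_find(s, len, spid, uid)) {
        return 0;
    }

    return si->handle;
}
```

do_find_service first calls find_svc to look up the service. find_svc returns a svcinfo struct that contains the service's handle, and do_find_service finally returns that handle, provided the isolation and permission checks pass.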
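The isolated-process check in do_find_service comes down to modular arithmetic on the caller's uid. The sketch below shows that arithmetic in isolation. The three constants are assumptions: they are written here with the values I understand android_filesystem_config.h to define, so verify them against the source tree in use. `isIsolatedUid` and `handleForCaller` are hypothetical helper names, and the svc_can_find permission check is deliberately left out.

```cpp
#include <cassert>
#include <cstdint>

// Assumed values, mirroring AID_USER, AID_ISOLATED_START and AID_ISOLATED_END.
constexpr uint32_t kAidUser = 100000;          // uid range reserved per Android user
constexpr uint32_t kAidIsolatedStart = 99000;  // first isolated app id
constexpr uint32_t kAidIsolatedEnd = 99999;    // last isolated app id

// Strips the per-user offset and tests whether the app id is in the isolated range.
inline bool isIsolatedUid(uint32_t uid) {
    const uint32_t appId = uid % kAidUser;
    return appId >= kAidIsolatedStart && appId <= kAidIsolatedEnd;
}

// Hypothetical condensed form of the decision: 0 means "not found / not allowed".
inline uint32_t handleForCaller(uint32_t handle, bool allowIsolated,
                                uint32_t callerUid) {
    if (handle == 0) return 0;
    if (!allowIsolated && isIsolatedUid(callerUid)) return 0;
    return handle;
}
```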

frameworks/native/cmds/servicemanager/service_manager.c

```c
struct svcinfo *find_svc(const uint16_t *s16, size_t len)
{
    struct svcinfo *si;

    // Linear scan of the registered services.
    for (si = svclist; si; si = si->next) {
        if ((len == si->len) &&
            !memcmp(s16, si->name, len * sizeof(uint16_t))) {
            return si;
        }
    }
    return NULL;
}
```

During service registration, ServiceManager calls do_add_service, which stores a svcinfo containing the service name and handle in the svclist list. Correspondingly, when a service is obtained, find_svc walks svclist and checks by name whether the requested service has been registered. If it has, find_svc returns the matching svcinfo; otherwise it returns NULL.
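The register-then-look-up relationship between do_add_service and find_svc can be modeled with a small singly linked list keyed by a UTF-16 name. This is a sketch under assumptions: `ServiceEntry` and `ServiceList` are hypothetical types, and the real svcinfo carries additional fields such as allow_isolated and dumpsys_priority.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <cstring>
#include <memory>
#include <string>
#include <utility>

// Hypothetical, reduced stand-in for svcinfo.
struct ServiceEntry {
    std::u16string name;
    uint32_t handle = 0;
    std::unique_ptr<ServiceEntry> next;
};

// Hypothetical stand-in for svclist.
class ServiceList {
public:
    // Prepends a new entry, similar in spirit to what registration does.
    void add(const std::u16string& name, uint32_t handle) {
        auto entry = std::make_unique<ServiceEntry>();
        entry->name = name;
        entry->handle = handle;
        entry->next = std::move(head_);
        head_ = std::move(entry);
    }

    // Same comparison as find_svc: equal length first, then raw bytes.
    const ServiceEntry* find(const char16_t* s, size_t len) const {
        for (const ServiceEntry* si = head_.get(); si; si = si->next.get()) {
            if (len == si->name.size() &&
                std::memcmp(s, si->name.data(), len * sizeof(char16_t)) == 0) {
                return si;
            }
        }
        return nullptr;
    }

private:
    std::unique_ptr<ServiceEntry> head_;
};
```

Comparing the length before the bytes keeps a name that is only a prefix of a registered name from matching.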